Databricks Feature Store

Feature stores have been used for a few years now to manage machine learning data and features. Feast, an open source feature store originally developed by Gojek with Google Cloud, and Uber's Michelangelo, an in-house machine learning platform with a feature data management layer, often inspire other companies to implement or buy a centralized feature storage service. Those efforts frequently stall on implementation complexity or budget constraints. In addition, as enterprises increasingly adopt managed Spark services like Databricks, integrating the managed Spark environment with a feature store implementation becomes a challenge of its own.

Recently (at the time of this writing), Databricks introduced a built-in feature storage and management layer in its workspace. This helps users who want a ready-installed feature store to try out. In this blog, we'll cover the basics of features and see how to use Databricks Feature Store to store, retrieve, and look up features, create a training dataset, and train a univariate time series model.

What is a Feature?

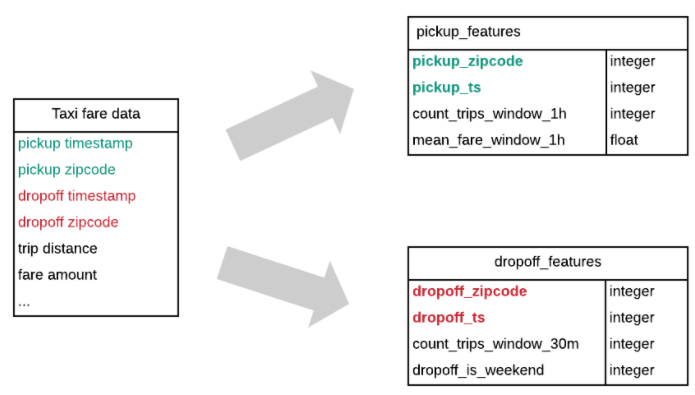

Features are data attributes used to build machine learning training sets. For example, raw data from the NYC Taxi dataset can be transformed into the following features:

1) Pickup features

Count of trips (time window = 1 hour, sliding window = 15 minutes)

Mean fare amount (time window = 1 hour, sliding window = 15 minutes)

2) Drop off features

Count of trips (time window = 30 minutes)

Does the trip end on the weekend (custom feature using Python code)

Features are curated and often transformed. The same feature can be reused by multiple teams across multiple ML models.
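To make the window definitions above concrete, here is a minimal pure-Python sketch of the pickup aggregates and the weekend flag. It is not how Databricks computes them; in practice you would likely use Spark for this. The field names (`pickup_zip`, `pickup_time`, `dropoff_time`, `fare`), the sample trips, and the choice to align windows to the Unix epoch are all assumptions made for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta

WINDOW = timedelta(hours=1)     # time window length
SLIDE = timedelta(minutes=15)   # a new window starts every 15 minutes
EPOCH = datetime(1970, 1, 1)    # assumption: windows are aligned to the epoch


def window_starts(ts, window=WINDOW, slide=SLIDE):
    """Return the start of every sliding window [start, start + window) containing ts."""
    # Latest window start that is <= ts.
    start = ts - (ts - EPOCH) % slide
    starts = []
    while start > ts - window:
        starts.append(start)
        start -= slide
    return sorted(starts)


def pickup_features(trips):
    """Aggregate trip count and mean fare per (pickup_zip, window start)."""
    totals = defaultdict(lambda: [0, 0.0])  # key -> [trip count, fare sum]
    for trip in trips:
        for start in window_starts(trip["pickup_time"]):
            acc = totals[(trip["pickup_zip"], start)]
            acc[0] += 1
            acc[1] += trip["fare"]
    return {
        key: {"count_trips": n, "mean_fare": total / n}
        for key, (n, total) in totals.items()
    }


def ends_on_weekend(dropoff_time):
    """Custom drop-off feature: True if the trip ends on Saturday or Sunday."""
    return dropoff_time.weekday() >= 5  # Monday is 0, Saturday is 5


# Hypothetical sample trips (2019-01-05 is a Saturday).
trips = [
    {"pickup_zip": "10001", "pickup_time": datetime(2019, 1, 5, 10, 20),
     "dropoff_time": datetime(2019, 1, 5, 10, 40), "fare": 10.0},
    {"pickup_zip": "10001", "pickup_time": datetime(2019, 1, 5, 10, 50),
     "dropoff_time": datetime(2019, 1, 5, 11, 5), "fare": 20.0},
]

features = pickup_features(trips)
for (zip_code, start), values in sorted(features.items()):
    print(zip_code, start, values)
```

Because the window is four times the slide, each trip lands in four overlapping windows, which is why one raw record contributes to several feature rows.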

What is a Feature Store?

A feature store is a data storage layer where data scientists and engineers can store, share, and discover curated features. It comes in two types:

Offline Store: Contains features for model training and batch inference. Databricks uses Delta tables as its offline store.